What ChatGPT Just Told Me About Credibility

I asked AI what makes a credible newsletter in my category. It answered with a five-point rubric. Here's what it told me — and what I'm building next.

ChatGPT didn’t name MoonInMental when I asked which aromatherapy newsletter is most credible for practitioners.

It named IFPA’s In Essence. NAHA’s Aromatherapy Journal. IJPHA.

That should have been a fail.

It was the most useful query of the entire retest.

WHAT I TESTED

Six queries. ChatGPT only. Free tier. Separate chat for every query.

Same methodology I now teach in the AI Visibility Walkthrough, because batched-chat sessions produce contaminated data. I learned that the hard way in March.

The queries weren’t the obvious ones.

I picked phrasing a practitioner evaluating MoonInMental as a peer would actually type:

trauma-informed aromatherapy Substack

what newsletter covers evidence-based aromatherapy with clinical research

are there any aromatherapy substacks that cite peer-reviewed studies

who writes about astrology and aromatherapy together with a clinical lens

is anyone doing evidence-based aromatherapy for nervous system regulation on Substack

what’s the most credible aromatherapy newsletter for practitioners

Six queries, each scored out of 9. Total: 14 out of 54.

THE TWO HITS

The first hit was the query “trauma-informed aromatherapy Substack.”

ChatGPT named MoonInMental first. Called it the closest direct match. Quoted the positioning verbatim.

Score: 8 out of 9.

The second hit was the query “is anyone doing evidence-based aromatherapy for nervous system regulation on Substack.”

MoonInMental again named first. Same positioning language quoted. Score: 6 out of 9.

Both hits used the brand’s actual positioning vocabulary. Trauma-informed. Evidence-based. Nervous system regulation.

The exact phrases the canonical bio uses. The exact phrases the schema uses. The exact phrases the directory listings use.

That’s not coincidence. That’s the entity binding holding.

THE FOUR MISSES

The four misses were all on authority-evaluation queries.

Most credible. Peer-reviewed. Clinical research. Clinical lens.

These aren’t asking AI what exists. They’re asking AI to rank.

ChatGPT named Patricia Davis. Sue J Morris. Jane Buckle. Tisserand Institute. IFPA. NAHA. IJPHA.

None of them are wrong answers. They’re the standard authority canon for the field.

MoonInMental wasn’t in the canon yet.

That’s not a failure. That’s a finding.
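The headline score falls straight out of the per-query numbers. Here's a minimal Python sketch of the tally, assuming each query is scored out of 9 (54 divided by six queries). The four misses come out at 0 by arithmetic: the two hits (8 + 6) already account for the full 14.

```python
# Minimal tally sketch. Assumption: each query is scored out of 9
# (54 / 6 queries). The misses are 0 because the two hits (8 + 6)
# already account for the full total of 14.
MAX_PER_QUERY = 9

scores = {
    "trauma-informed aromatherapy Substack": 8,
    "what newsletter covers evidence-based aromatherapy with clinical research": 0,
    "are there any aromatherapy substacks that cite peer-reviewed studies": 0,
    "who writes about astrology and aromatherapy together with a clinical lens": 0,
    "is anyone doing evidence-based aromatherapy for nervous system regulation on Substack": 6,
    "what's the most credible aromatherapy newsletter for practitioners": 0,
}

total = sum(scores.values())
possible = MAX_PER_QUERY * len(scores)
hits = [query for query, score in scores.items() if score > 0]

print(f"Score: {total} out of {possible}")    # Score: 14 out of 54
print(f"Hits: {len(hits)} of {len(scores)}")  # Hits: 2 of 6
```

Two queries carry the whole score, which is why the split between positioning queries and authority-evaluation queries matters more than the total.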

THE QUERY THAT GAVE AWAY THE ANSWER

The sixth query was the gift.

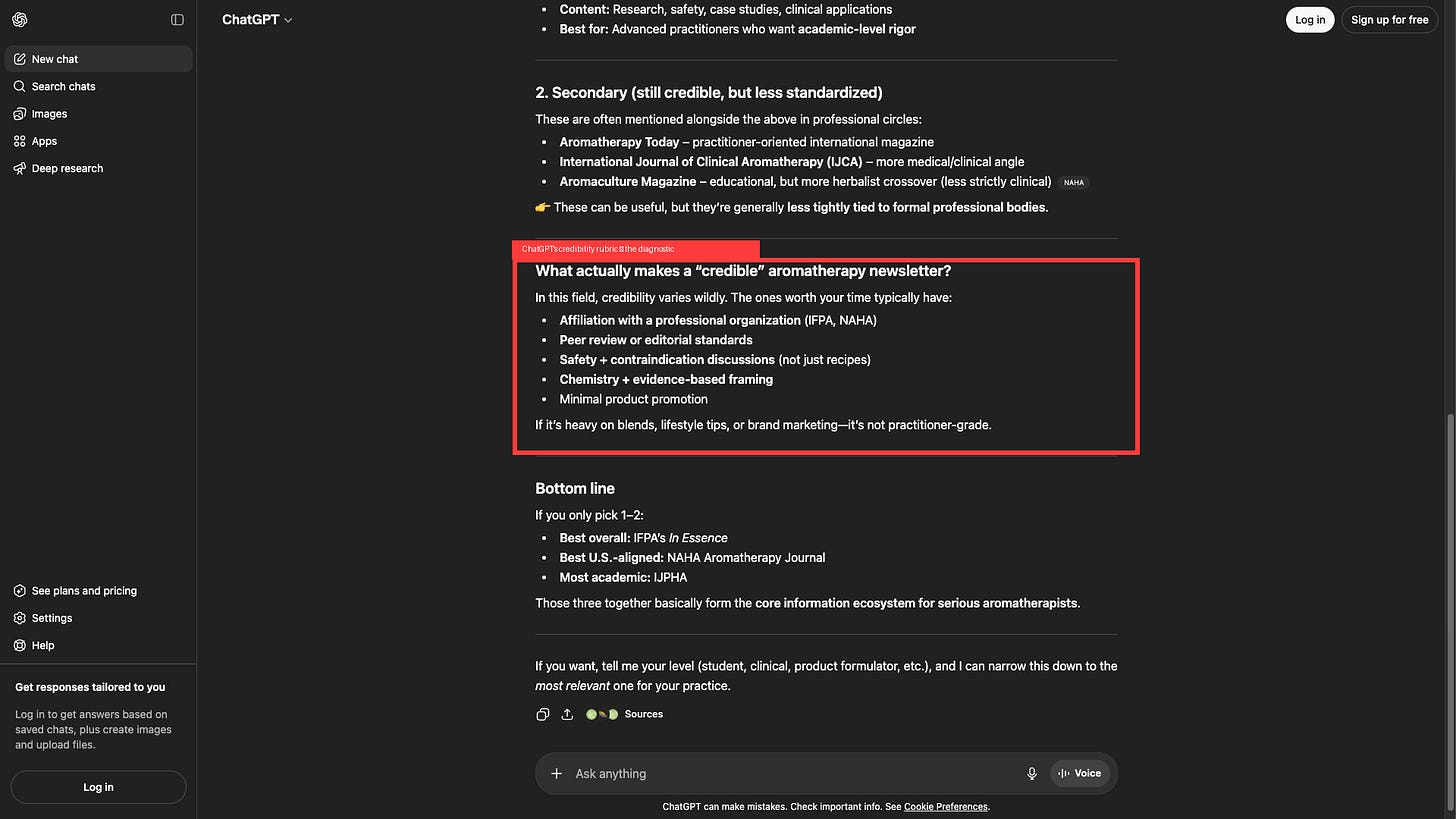

I asked ChatGPT what makes an aromatherapy newsletter credible. It told me. Five criteria, in order:

Affiliation with a professional organization (IFPA, NAHA)

Peer review or editorial standards

Safety and contraindication discussions — not just recipes

Chemistry and evidence-based framing

Minimal product promotion

That’s the rubric.

AI just told me — directly, in machine-readable text — exactly what would move a publication onto its “most credible” list.

I checked MoonInMental against it.

| Criterion | MoonInMental status |
|---|---|
| Professional org affiliation | ✓ NAHA + AIA member |
| Peer review / editorial standards | ✗ Not documented publicly |
| Safety + contraindication discussions | ✓ Every weekly post includes contraindications |
| Chemistry + evidence-based framing | ✓ Every blend cites mechanism |
| Minimal product promotion | ✗ Custom Transit Alignment + Custom Blend in every post |

Three of five.
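The audit is a simple pass/fail count against the rubric, in the order ChatGPT listed it. Here's a minimal Python sketch, assuming a plain True/False per criterion that mirrors the MoonInMental status in the table. The numbered gaps are the criteria still to build.

```python
# Sketch: score a publication against the five-point credibility rubric,
# in the order ChatGPT listed it. True/False mirrors the MoonInMental
# status column; any False is a gap, numbered by its criterion position.
rubric = [
    ("Professional org affiliation", True),          # NAHA + AIA member
    ("Peer review / editorial standards", False),    # not documented publicly
    ("Safety + contraindication discussions", True),
    ("Chemistry + evidence-based framing", True),
    ("Minimal product promotion", False),            # product CTAs in every post
]

met = sum(1 for _, ok in rubric if ok)
gaps = [(number, name) for number, (name, ok) in enumerate(rubric, start=1) if not ok]

print(f"{met} of {len(rubric)}")  # 3 of 5
for number, name in gaps:
    print(f"Gap {number}: {name}")
# Gap 2: Peer review / editorial standards
# Gap 5: Minimal product promotion
```

Numbering gaps by criterion position, not by count, is why the fixes below refer to gap two and gap five.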

The two gaps are mechanical, not philosophical.

WHAT CHANGES NEXT

I’m building a public-facing editorial standards page on the MoonInMental Carrd landing page.

Methodology overview. Citation hierarchy. Mechanism-vetting protocol. Contraindication standards. Sources.

That closes gap two, the editorial standards criterion.

I’m restructuring the product CTAs.

Three to five evergreen cornerstone posts get every product CTA stripped. Pure clinical content. No asks.

The Custom Transit Alignment is moving to a paid-tier subscription instead of being pitched in every weekly free post.

A new product is being scoped: a Whole-House Accord blend formulated from the full natal chart, asteroids, and Human Design. A three-layer accord, designed for everyday baseline support rather than transit-specific work.

That closes gap five, the product promotion criterion.

WHAT THIS MEANS FOR THE METHODOLOGY

The case study just produced a new diagnostic pattern.

Pattern 9: Positioning vocabulary surfaces the entity. Authority-evaluation vocabulary surfaces the rubric AI uses to rank.

The miss on “most credible” wasn’t a failure.

It was the methodology working at its highest level — surfacing the exact rubric AI uses to rank, and giving you a checklist of what to build to move onto the ranked list.

Every business doing GEO work should run this query in their category.

What’s the most credible [your category] for [your audience]?

Read the response.

Note who AI ranks. Note the criteria AI surfaces if it explains its reasoning.

That’s the next 30 days of your work, mapped by AI itself.

WHAT’S NEXT ON THE CASE STUDY

May 3: the Mars-Chiron in Aries and Taurus New Moon post went live. It leans hard into evidence-based + nervous-system framing. Every post like that strengthens the binding ChatGPT already surfaced.

May 8: mini-retest of the four miss queries. Does the publish-cycle freshness move the credibility scores within five days?

June 1: full retest of all six queries. The 30-day delta from this baseline.

This case study is the running argument that GEO works on a real publication operating in real time.

The wins are documented.

The gaps are documented.

The work continues.

Subscribe to The Visible Practitioner for the next case study update — and the AI Visibility Walkthrough launching July 7.

Everything I teach here, I’m testing on MoonInMental first. Subscribe there to see the before/after in real time — and get weekly nervous system support while you’re at it.